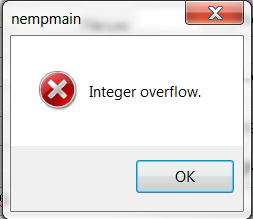

Our Partdtlseq is at 25 million after 11 years. That seems like an insanely high number of records. Surely others would have run into this before us, right? I mean, we’re pretty small company (although maybe it’s something to do with our BOM structures, or how we run MRP so heavy every night and re-create some 12k jobs?).Could I have changed the column type to BIGINT and been okay, or would that have just broken application server layer of Epicor?.Did I just introduce some other issue we haven’t stumbled upon yet, but is going to bite us soon?.Is this a design problem or bug? Should I report it?.affect Time Phased Inquiry) back up and running by resetting the PartDtlSeq IDENTITY back to 1: So I got our Epicor, MRP, Job Creation, Order Creation, PO creation (anything that would insert a PartDtl record, i.e.

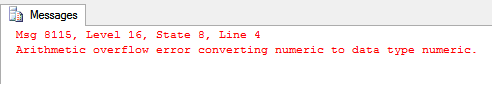

I also observed there is so much churn in this table (we only have about 1.1 Million rows in there currently) that the smallest PartDtlSeq currently for us (other than a few anomalies I can probably just delete) was at about ~1.6 or 1.7 Billion. Apparently this has an IDENTITY (auto-incrementing) integer column called PartDtlSeq and since we churn a lot every night in our MRP runs, we managed to hit the ~2.14 Billion limit of integers. I looked into it, and discovered that I couldn’t even create/save a job, and the root cause was a SQL DB error:Īrithmetic overflow error converting IDENTITY to data type intĪnd the SQL query that was throwing this was an INSERT statement trying to create a row in the Erp.PartDtl table. I’ll try to keep this brief - this weekend, MRP stopped working. enabled = false > SELECT arg1 * arg2 FROM VALUES ( 100 Y, 100 Y ), ( 20 Y, 5 Y ) AS t ( arg1, arg2 ) 16 100 > SET spark. > SET ANSI_MODE = false > SELECT arg1 * arg2 FROM VALUES ( 100 Y, 100 Y ), ( 20 Y, 5 Y ) AS t ( arg1, arg2 ) 16 100 > SET ANSI_MODE = true - In Databricks Runtime temporarily disable ANSI mode to tolerate incorrect overflow. Allowing overflows to be treated as NULL > SELECT try_multiply ( arg1, arg2 ) FROM VALUES ( 100 Y, 100 Y ), ( 20 Y, 5 Y ) AS t ( arg1, arg2 ) NULL 100 - In Databricks SQL temporarily disable ANSI mode to tolerate incorrect overflow. If necessary set ansi_mode to "false" ( except for ANSI interval type ) to bypass this error. 0 - An occasional overfklow that should be tolerated > SELECT arg1 * arg2 FROM VALUES ( 100 Y, 100 Y ), ( 20 Y, 5 Y ) AS t ( arg1, arg2 ) 100 S * 100 S caused overflow. Rewrite the expression > SELECT 100 Y / 5 * 10 Y 200. enabled to "false" ( except for ANSI interval type ) to bypass this error. Use a wider numeric to perform the operation by casting one of the operands > SELECT 100 Y * cast ( 100 Y AS INTEGER ) 10000 - An overflow of a complex expression which can be rewritten > SELECT 100 Y * 10 Y / 5 100 S * 10 S caused overflow. An overflow of a small numeric > SELECT 100 Y * 100 Y 100 S * 100 S caused overflow.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed